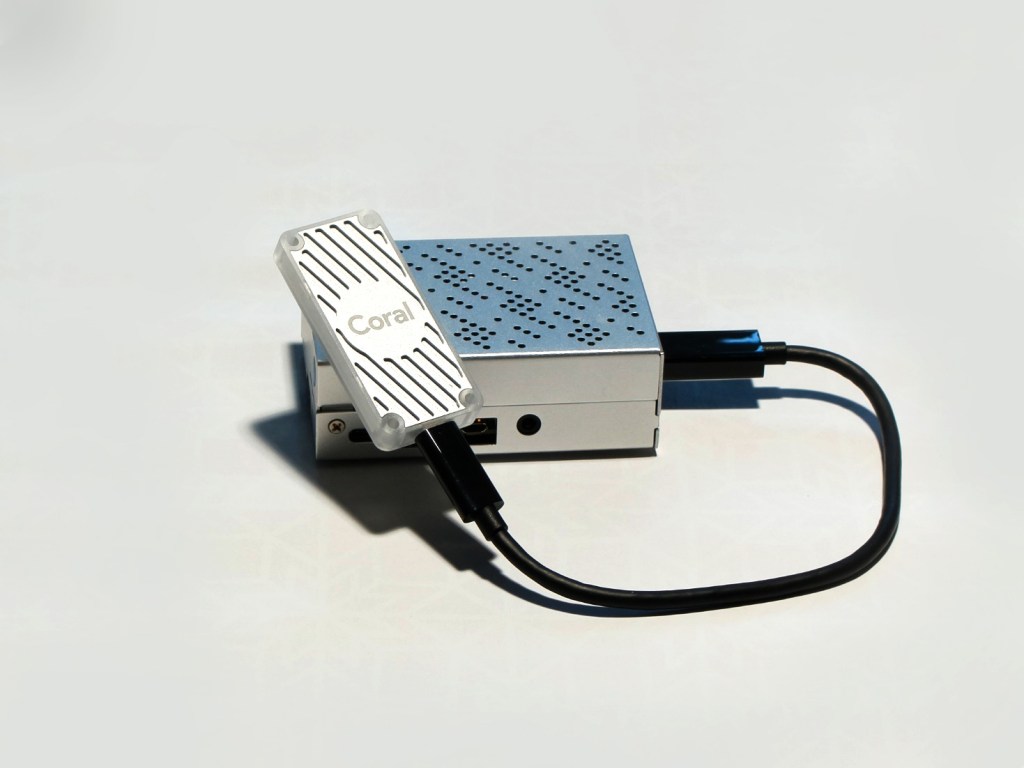

The Coral USB Accelerator from Google is a tiny Edge TPU coprocessor optimised to run TensorFlow Lite, adding powerful AI capabilities to many different host systems, including Raspberry Pi. This guide shows how to attach, configure and test the Coral to run accelerated machine learning projects on a Raspberry Pi.

The 65mm x 30mm board is supplied with a USB 3.0 Type-C cable for attaching to the host and is compatible with Linux, macOS and Windows 10. We tested using the Raspberry Pi 4 Model B kit.

Using one of the many pre-built TensorFlow examples provided by Google, we configured Coral to identify images of birds. The example model is trained to recognise almost 1000 different species of birds and, in our tests, correctly identified nearly every image we tried.

Example code is written in Python and runs entirely on the host / Coral so it can be used without relying on cloud services, enabling the building of dedicated AI applications that don’t require internet access to run.

Coral comes with excellent documentation and working example projects covering image recognition, object tracking with video, human pose, facial and key-phrase detection so you can learn to build your own machine learning models for specific purposes.

Flash Raspberry Pi OS

Using an 8GB or larger SD card, flash the latest version of Raspberry Pi OS Lite. Full details of how to do this are available on the Raspberry Pi download page: https://www.raspberrypi.org/software/operating-systems/

Enable remote access using ssh.

- After flashing the SD card, remove it from the host PC card reader and then re-insert it.

- Create an empty file named ssh in the boot drive of the SD card using a file manager. The file must have no extension.

- Eject the SD card from the host PC and insert it into the Raspberry Pi.

- Connect the Raspberry Pi to your network using Ethernet.

- Power up the Raspberry Pi without the Coral attached and allow 2 mins for setup to complete.
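The empty ssh file from the steps above can also be created with a short script instead of a file manager. This is a minimal sketch: the function name `enable_ssh` is ours, and the boot drive path must be supplied by you because the mount point differs between host operating systems.

```python
from pathlib import Path


def enable_ssh(boot_dir):
    """Create an empty file named 'ssh' (no extension) in the boot drive."""
    marker = Path(boot_dir) / "ssh"
    # touch() creates a zero-byte file and leaves an existing one untouched.
    marker.touch(exist_ok=True)
    return marker


# Example (hypothetical mount point, adjust for your host PC):
# enable_ssh("/media/user/boot")
```

The file content is irrelevant; only its presence in the boot drive matters.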

When the Raspberry Pi has booted, open a command window (Terminal) on your host PC and log in using ssh with the default username pi and password raspberry.

If the Raspberry Pi hostname is not resolved, try using ssh pi@raspberrypi.local, or check your router’s admin page and use the Pi’s IP address: ssh pi@XXX.XXX.XXX.XXX
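If you are unsure which name your network resolves, a small script run on the host PC can try each candidate in turn. This is a sketch only: the function name `resolve_pi` and the list of candidate names are our assumptions, and a name that fails here may still be reachable by IP address.

```python
import socket


def resolve_pi(hostnames=("raspberrypi", "raspberrypi.local"),
               resolver=socket.gethostbyname):
    """Return (hostname, ip) for the first name that resolves, else None."""
    for name in hostnames:
        try:
            return name, resolver(name)
        except OSError:  # socket.gaierror is a subclass of OSError
            continue
    return None


# Example usage:
# found = resolve_pi()
# print(f"ssh pi@{found[1]}" if found else "Check the router for the Pi's IP")
```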

Install Python dependencies

Coral examples use Python so several libraries need installing. Before doing this make sure that Raspberry Pi OS is up to date:

sudo apt update && sudo apt upgrade

Install the Edge TPU runtime

Google provide optimised Python packages for Coral – these are in a separate repository which must be added to the system:

echo "deb https://packages.cloud.google.com/apt coral-edgetpu-stable main" | sudo tee /etc/apt/sources.list.d/coral-edgetpu.list

Add the key signature:

curl https://packages.cloud.google.com/apt/doc/apt-key.gpg | sudo apt-key add -

Update the system:

sudo apt update

Install the Edge TPU runtime:

sudo apt-get install libedgetpu1-std

Connect Coral USB Accelerator

Now it’s time to connect your Coral using the USB-C cable supplied. The cable conforms to the USB 3.0 standard and should be attached to one of the blue USB 3.0 ports on the Raspberry Pi to allow the fastest transfer speeds.

If you already attached the Coral before this step, detach and reattach it.
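To confirm that the Raspberry Pi can see the accelerator, you can look for it in the output of lsusb. The sketch below is an illustration, not part of the Coral tooling: the function names are ours, and the two USB IDs are an assumption based on the values commonly reported for this device (one before the first inference, the other after).

```python
import subprocess

# Assumption: IDs commonly reported for the Coral USB Accelerator.
# 1a6e:089a is typically listed before the first inference, 18d1:9302 after.
CORAL_USB_IDS = {"1a6e:089a", "18d1:9302"}


def find_coral(lsusb_output):
    """Return the lsusb lines whose vendor:product ID is in CORAL_USB_IDS."""
    matches = []
    for line in lsusb_output.splitlines():
        fields = line.split()
        # Typical line: "Bus 002 Device 003: ID 18d1:9302 Google Inc."
        if "ID" in fields:
            idx = fields.index("ID")
            if idx + 1 < len(fields) and fields[idx + 1].lower() in CORAL_USB_IDS:
                matches.append(line.strip())
    return matches


def coral_attached():
    """Run lsusb on the Pi and report whether a matching device is listed."""
    result = subprocess.run(["lsusb"], capture_output=True, text=True, check=True)
    return bool(find_coral(result.stdout))
```

If `coral_attached()` returns False, detach and reattach the Coral and check that it is on a blue port.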

Install TensorFlow Lite

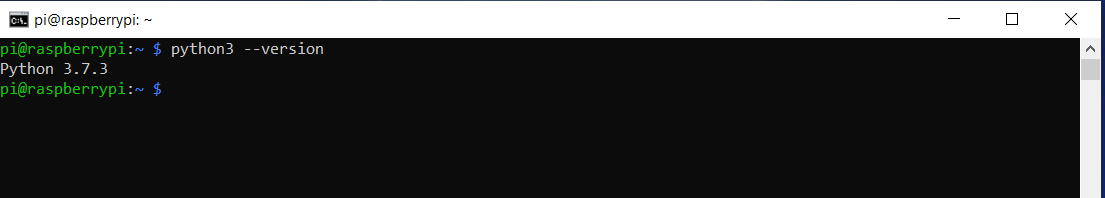

Several different TensorFlow Lite libraries are provided for Coral depending on the platform and Python version. If you are using the standard Raspberry Pi OS, this is Linux ARM 32 with Python 3.7 (at the time of writing).

Check the Python version using the following command:

python3 --version

Go to the TensorFlow Lite Python Quickstart page: https://www.tensorflow.org/lite/guide/python

Install the correct version for your OS. Our system was Linux ARM 32 / Python 3.7.

Check whether a newer version is available before installing.
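If you prefer to read the platform details from Python itself, the standard library reports both values needed to pick the right build. This is a small sketch; the function name `wheel_tags` is ours, and the exact machine string depends on your OS image (a 32-bit Raspberry Pi OS would typically report armv7l).

```python
import platform
import sys


def wheel_tags(version_info=None, machine=None):
    """Return (python_tag, machine), e.g. ('cp37', 'armv7l')."""
    version_info = version_info or sys.version_info
    machine = machine or platform.machine()
    # CPython 3.7 is written as "cp37" in package file names.
    python_tag = f"cp{version_info[0]}{version_info[1]}"
    return python_tag, machine


print(wheel_tags())
```

Match the printed pair against the builds listed on the quickstart page.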

Now that your system is configured and Coral is correctly installed, you can run a test using one of the example models provided for Coral.

Test run

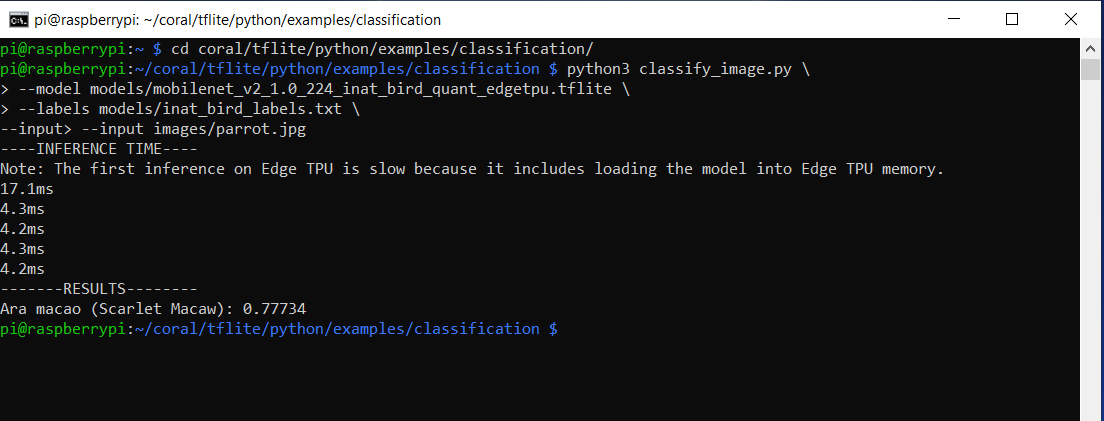

Coral comes with several TensorFlow examples, including a classification model which identifies images of different bird species. A sample image of a Scarlet Macaw is provided for testing.

Install the Coral bird classifier example:

mkdir coral && cd coral

git clone https://github.com/google-coral/tflite.git

Download the bird classifier model, labels file, and test image:

cd tflite/python/examples/classification

bash install_requirements.sh

Run the image classifier with the test image:

python3 classify_image.py \
--model models/mobilenet_v2_1.0_224_inat_bird_quant_edgetpu.tflite \
--labels models/inat_bird_labels.txt \
--input images/parrot.jpg

Coral will process the image and should recognise the bird as a Scarlet Macaw.

Test image of Scarlet Macaw provided with the example model.

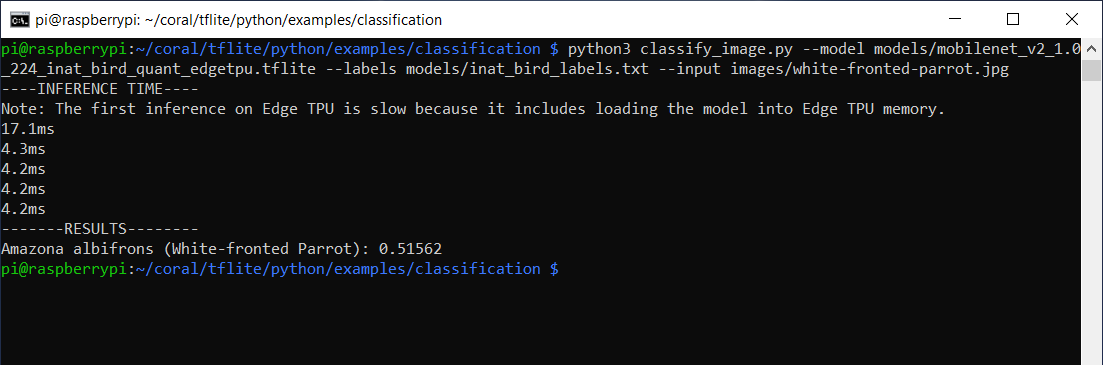
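For readers curious how a classifier turns raw model output into a species name, the following sketch shows one common approach: convert the 8-bit quantised scores to floating point, then pick the highest-scoring classes. It is an illustration only and not the code of classify_image.py. It assumes the model emits quantised scores (as the "quant" in the model file name suggests), and the `scale`, `zero_point` and label values in the usage lines are made up.

```python
def top_k(raw_scores, labels, scale, zero_point, k=3, threshold=0.0):
    """Dequantise raw scores and return the k best (label, score) pairs."""
    # Standard affine dequantisation: real = scale * (raw - zero_point)
    scores = [scale * (raw - zero_point) for raw in raw_scores]
    ranked = sorted(enumerate(scores), key=lambda pair: pair[1], reverse=True)
    return [
        (labels.get(index, str(index)), score)
        for index, score in ranked[:k]
        if score >= threshold
    ]


# Hypothetical values for illustration only.
labels = {0: "background", 1: "Ara macao (Scarlet Macaw)", 2: "other bird"}
print(top_k([0, 255, 128], labels, scale=1 / 256, zero_point=0, k=2))
```

The first pair returned is the model's best guess; the threshold parameter lets you discard low-confidence results.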

Run further tests

The bird classification model has been trained to recognise almost 1000 different bird species so you should be able to process random images of birds to see if they are recognised. The list of species that the model can recognise is in the file:

/home/pi/coral/tflite/python/examples/classification/models/inat_bird_labels.txt
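Rather than scrolling through the file, you can search it for a species before choosing a test image. The sketch below assumes each line is either "index label" or just a label; check your copy of the file, as we have not reproduced its exact layout here. The function names and the sample lines are ours.

```python
import re


def load_labels(lines):
    """Map class index to label for 'index label' or label-only lines."""
    labels = {}
    for row, line in enumerate(lines):
        line = line.strip()
        if not line:
            continue
        match = re.match(r"(\d+)\s+(.+)", line)
        if match:
            labels[int(match.group(1))] = match.group(2)
        else:
            # No leading number: fall back to the line position.
            labels[row] = line
    return labels


def find_species(labels, term):
    """Return sorted (index, label) pairs containing term, ignoring case."""
    term = term.lower()
    return sorted((i, name) for i, name in labels.items() if term in name.lower())


# Made-up sample lines; on the Pi, pass open(path) for the labels file instead.
sample = ["0 Ara macao (Scarlet Macaw)", "1 Amazona albifrons (White-fronted Amazon)"]
print(find_species(load_labels(sample), "amazon"))
```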

We checked the label file and downloaded random images of parrots (in the spirit of Python examples) to see if they were recognised.

To test, copy the image into the images directory of the classification example, then modify the --input argument of the command to point at the chosen image.
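When testing several images, it can be convenient to generate the command for each one. This sketch reuses the model, labels and flags from the command above; the helper names and the image file name `amazon.jpg` are hypothetical.

```python
import subprocess
from pathlib import Path

EXAMPLE_DIR = Path("/home/pi/coral/tflite/python/examples/classification")
MODEL = "models/mobilenet_v2_1.0_224_inat_bird_quant_edgetpu.tflite"
LABELS = "models/inat_bird_labels.txt"


def classify_command(image_name):
    """Build the argument list for classify_image.py with a chosen image."""
    return [
        "python3", "classify_image.py",
        "--model", MODEL,
        "--labels", LABELS,
        "--input", f"images/{image_name}",
    ]


def classify_all(directory=EXAMPLE_DIR):
    """Run the classifier once for every .jpg in the images directory."""
    for image in sorted((directory / "images").glob("*.jpg")):
        subprocess.run(classify_command(image.name), cwd=directory, check=True)


print(" ".join(classify_command("amazon.jpg")))
```

Calling `classify_all()` on the Pi runs each image in turn and stops at the first failure because of `check=True`.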

We used this shot of a White-fronted amazon (Amazona albifrons)

It was identified:

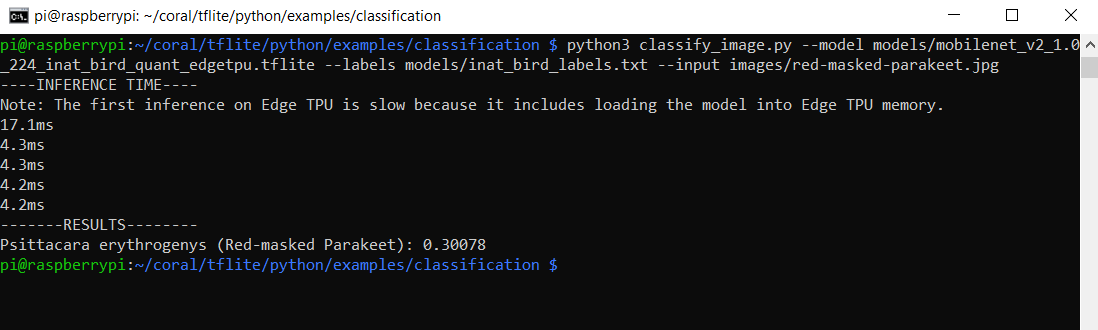

We also used this image of two Red-masked parakeets (Aratinga erythrogenys).

This was also recognised, despite the fact that there are two birds in the image. We found very few examples from the trained list that were not recognised correctly.

Summary

After following this guide, you should have added powerful AI capabilities to your Raspberry Pi using the Coral USB Accelerator.

Coral runs TensorFlow Lite models at the edge without the need for internet connectivity. This makes it well suited to embedding in many kinds of ML applications, including image recognition, gesture analysis and voice recognition, and in our tests it gave highly accurate results.

Can you tell the difference between a Red-masked parakeet and a White-fronted amazon? Coral can!

For further details visit the Google Coral documentation pages: https://coral.ai/examples/